NVIDIA has announced the NVIDIA Maxine platform, which provides developers with a cloud-based suite of GPU-accelerated AI video conferencing software to enhance streaming video, the internet’s No. 1 source of traffic.

NVIDIA Maxine is a cloud-native streaming video AI platform that makes it possible for service providers to bring new AI-powered capabilities to the more than 30 million web meetings estimated to take place every day. Video conference service providers running the platform on NVIDIA GPUs in the cloud can offer users new AI effects — including gaze correction, super-resolution, noise cancellation, face relighting and more.

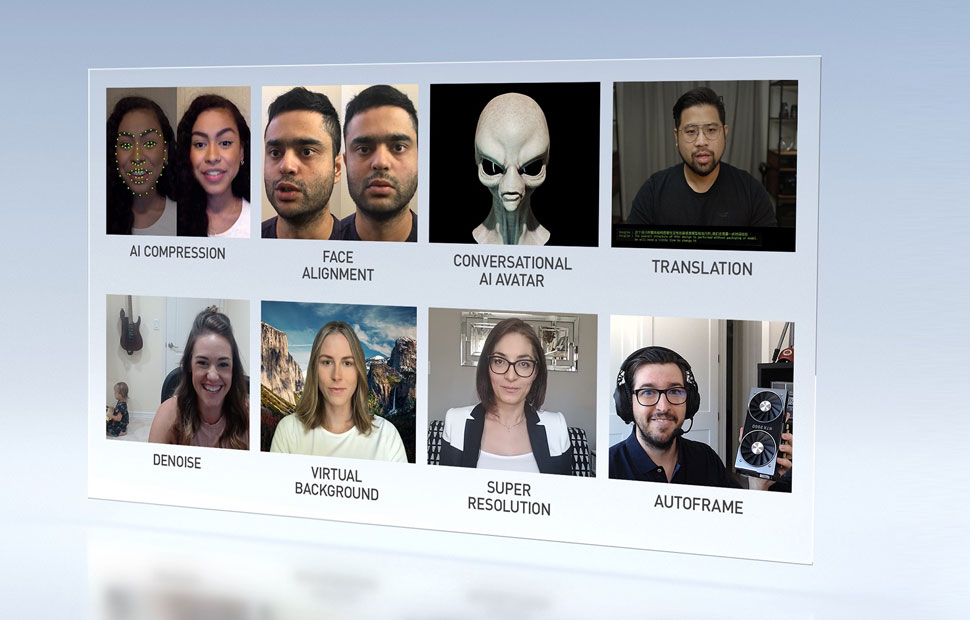

The NVIDIA Maxine platform reduces how much bandwidth is required for video calls. Instead of streaming the entire screen of pixels, the AI software analyzes the key facial points of each person on a call and then intelligently re-animates the face in the video on the other side. This makes it possible to stream video with far less data flowing back and forth across the internet.
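To get a rough feel for why sending facial keypoints is cheaper than sending pixels, the sketch below compares the size of one uncompressed video frame with the size of a set of 2D keypoints. The resolution, the keypoint count (68, a figure borrowed from common face-landmark models) and the float32 encoding are illustrative assumptions, not details of Maxine. Real codecs such as H.264 already compress frames far below the raw size shown here, so the ratio it prints is not a measure of Maxine's savings.

```python
# Illustrative only: every constant here is an assumption,
# not a parameter of the Maxine platform.

def raw_frame_bytes(width: int, height: int, bytes_per_pixel: int = 3) -> int:
    """Size in bytes of one uncompressed RGB frame."""
    return width * height * bytes_per_pixel


def keypoint_bytes(num_keypoints: int,
                   coords_per_point: int = 2,
                   bytes_per_coord: int = 4) -> int:
    """Size in bytes of a set of keypoints stored as float32 (x, y) pairs."""
    return num_keypoints * coords_per_point * bytes_per_coord


frame = raw_frame_bytes(1280, 720)   # hypothetical 720p frame
points = keypoint_bytes(68)          # hypothetical 68-landmark face model

print(f"raw frame: {frame} bytes")
print(f"keypoints: {points} bytes")
print(f"ratio:     {frame / points:.0f}x")
```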

Using this new AI-based video compression technology running on NVIDIA GPUs, developers can reduce video bandwidth consumption to one-tenth of what the H.264 streaming video compression standard requires.
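As a quick worked example of the one-tenth figure, the sketch below estimates how much data a single call would transfer. The 1.5 Mbps H.264 bitrate and the one-hour call length are hypothetical inputs chosen for illustration. Only the one-tenth ratio comes from the claim above.

```python
def data_per_call_mb(bitrate_mbps: float, minutes: float) -> float:
    """Megabytes transferred by a stream at the given bitrate and duration."""
    seconds = minutes * 60
    return bitrate_mbps * seconds / 8  # 8 bits per byte


H264_BITRATE_MBPS = 1.5   # hypothetical bitrate for an H.264 video call
AI_RATIO = 0.1            # "one-tenth" of the H.264 requirement, per the article
CALL_MINUTES = 60         # hypothetical call length

h264_mb = data_per_call_mb(H264_BITRATE_MBPS, CALL_MINUTES)
ai_mb = data_per_call_mb(H264_BITRATE_MBPS * AI_RATIO, CALL_MINUTES)

print(f"H.264:          {h264_mb:.1f} MB per call")
print(f"AI compression: {ai_mb:.1f} MB per call")
print(f"saved:          {h264_mb - ai_mb:.1f} MB per call")
```

Under these assumed inputs, the saving scales linearly with both bitrate and call length, so a provider could substitute its own measured figures to estimate aggregate traffic reduction.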

This cuts costs for providers and delivers a smoother video conferencing experience for end users, who can enjoy more AI-powered services while streaming less data on their computers, tablets and phones.

AI Features Improve Video Conferencing Experiences

New breakthroughs by NVIDIA researchers that will be included in Maxine make video conferencing feel more like face-to-face conversation. Video conference service providers will be able to take advantage of NVIDIA research in GANs, or generative adversarial networks, to offer a variety of new features.

For example, face alignment enables faces to be automatically adjusted so that people appear to be facing each other during a call, while gaze correction helps simulate eye contact, even if the camera isn’t aligned with the user’s screen. With video conferencing growing by 10x since the beginning of the year, these features help people stay engaged in the conversation rather than looking at their camera.

Developers can also add features that allow call participants to choose their own animated avatars with realistic animation automatically driven by their voice and emotional tone in real time. An auto frame option allows the video feed to follow the speaker even if they move away from the screen.

Using conversational AI features powered by the NVIDIA Jarvis SDK, developers can integrate virtual assistants that use state-of-the-art AI language models for speech recognition, language understanding and speech generation. The virtual assistants can take notes, set action items and answer questions in human-like voices. Additional conversational AI services such as translations, closed captioning and transcriptions help ensure participants can understand what is being discussed on the call.